
TensorFlow 2.0 Tutorial and Worked Examples (Part 2)

Example code

    import tensorflow as tf

    print(tf.__version__)

    # Select CPU or GPU; log which device each op is placed on
    tf.debugging.set_log_device_placement(True)
    cpus = tf.config.list_physical_devices(device_type='CPU')
    print('cpus', cpus)
    tf.config.set_visible_devices(cpus)

    A = tf.constant([[1, 2], [3, 4]])
    print('A', A, type(A), A.numpy(), dir(A))
    B = tf.constant([[5, 6], [7, 8]])
    C = tf.matmul(A, B)
    print(C, type(C), C.shape, vars(C))

    # Temporarily pin a block of ops to a specific device
    with tf.device('/cpu:0'):
        A = tf.constant([[1, 2], [3, 4]])
        B = tf.constant([[5, 6], [7, 8]])
        C = tf.matmul(A, B)
        print(C, type(C), C.shape, C.device)

    # Tensors: a random scalar (shape=() means zero dimensions)
    random_float = tf.random.uniform(shape=())
    print('random_float', random_float)

    # A zero vector with 2 elements
    zero_vector = tf.zeros(shape=(2,))
    print('zero_vector', zero_vector)

    # Automatic differentiation: dy/dx for y = x^2 at x = 3
    x = tf.Variable(initial_value=3.)
    with tf.GradientTape() as tape:
        y = tf.square(x)
    y_grad = tape.gradient(y, x)
    print('y_grad', y_grad)
    print('tape', tape, type(tape))

    # Gradients of a sum-of-squares loss with respect to w and b
    X = tf.constant([[1., 2.], [3., 4.]])
    y = tf.constant([[1.], [2.]])
    w = tf.Variable(initial_value=[[1.], [2.]])
    b = tf.Variable(initial_value=1.)
    with tf.GradientTape() as tape:
        L = tf.reduce_sum(tf.square(tf.matmul(X, w) + b - y))
    w_grad, b_grad = tape.gradient(L, [w, b])
    print(w_grad, b_grad)

TensorFlow 2 linear model steps

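- Use tf.keras.datasets to load and preprocess the dataset

- Use tf.keras.Model and tf.keras.layers to build the model

- Build the training loop: compute the loss with tf.keras.losses and update the model parameters with tf.keras.optimizers

- Build the evaluation loop: compute evaluation metrics with tf.keras.metrics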
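
The four steps above can be sketched end to end as follows. This is a minimal illustration, not the tutorial's own code. To avoid a dataset download, synthetic data stands in for tf.keras.datasets. The class name `SoftmaxClassifier`, the data shapes, the learning rate, and the epoch count are all assumptions chosen for the example, and the accuracy is measured on the training data itself.

```python
import numpy as np
import tensorflow as tf

# Step 1 (stand-in): synthetic data instead of a tf.keras.datasets download.
# Assumption: 256 samples, 4 features, 3 classes produced by a fixed linear rule.
rng = np.random.default_rng(0)
features = rng.normal(size=(256, 4)).astype(np.float32)
true_w = rng.normal(size=(4, 3)).astype(np.float32)
labels = np.argmax(features @ true_w, axis=1).astype(np.int32)


# Step 2: build the model from tf.keras.Model and tf.keras.layers.
class SoftmaxClassifier(tf.keras.Model):  # hypothetical name
    def __init__(self, num_classes):
        super().__init__()
        self.dense = tf.keras.layers.Dense(units=num_classes)

    def call(self, inputs):
        # Return class probabilities.
        return tf.nn.softmax(self.dense(inputs))


model = SoftmaxClassifier(num_classes=3)
optimizer = tf.keras.optimizers.SGD(learning_rate=0.5)

# Step 3: training loop with a tf.keras.losses function and an optimizer.
for epoch in range(200):
    with tf.GradientTape() as tape:
        probs = model(features)
        loss = tf.reduce_mean(
            tf.keras.losses.sparse_categorical_crossentropy(
                y_true=labels, y_pred=probs))
    grads = tape.gradient(loss, model.trainable_variables)
    optimizer.apply_gradients(zip(grads, model.trainable_variables))

# Step 4: evaluation with a tf.keras.metrics object.
metric = tf.keras.metrics.SparseCategoricalAccuracy()
metric.update_state(y_true=labels, y_pred=model(features))
accuracy = float(metric.result())
print("training-set accuracy: %f" % accuracy)
```

With a real dataset, step 1 would instead call a loader such as tf.keras.datasets.mnist.load_data(), and step 4 would run on held-out test data.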

Source code

    import numpy as np
    import tensorflow as tf

    # Data
    X_raw = np.array([2013, 2014, 2015, 2016, 2017], dtype=np.float32)
    y_raw = np.array([12000, 14000, 15000, 16500, 17500], dtype=np.float32)

    # Min-max normalization to [0, 1]
    X = (X_raw - X_raw.min()) / (X_raw.max() - X_raw.min())
    y = (y_raw - y_raw.min()) / (y_raw.max() - y_raw.min())

    X = tf.constant(X)
    y = tf.constant(y)

    a = tf.Variable(initial_value=0.)
    b = tf.Variable(initial_value=0.)
    variables = [a, b]

    num_epoch = 10000
    optimizer = tf.keras.optimizers.SGD(learning_rate=5e-4)
    for e in range(num_epoch):
        # Record the forward pass with tf.GradientTape() so the loss can be differentiated
        with tf.GradientTape() as tape:
            y_pred = a * X + b
            loss = tf.reduce_sum(tf.square(y_pred - y))
        # TensorFlow computes the gradient of the loss with respect to the model parameters
        grads = tape.gradient(loss, variables)
        # TensorFlow updates the parameters from the gradients
        optimizer.apply_gradients(grads_and_vars=zip(grads, variables))
    print(a, b)

    # Method 2: Keras
    X = tf.constant([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])
    y = tf.constant([[10.0], [20.0]])

    class Linear(tf.keras.Model):
        def __init__(self):
            super().__init__()
            # Zero-initialize the weights through the *_initializer arguments.
            # activation=None keeps the layer linear; with zero initialization,
            # a ReLU output would have zero gradient and the model would never train.
            self.dense = tf.keras.layers.Dense(
                units=1,
                use_bias=True,
                activation=None,
                kernel_initializer=tf.zeros_initializer(),
                bias_initializer=tf.zeros_initializer(),
            )

        def call(self, inputs):
            return self.dense(inputs)

    model = Linear()
    optimizer = tf.keras.optimizers.SGD(learning_rate=0.01)
    for i in range(100):
        with tf.GradientTape() as tape:
            y_pred = model(X)
            loss = tf.reduce_mean(tf.square(y_pred - y))
        grads = tape.gradient(loss, model.variables)
        optimizer.apply_gradients(grads_and_vars=zip(grads, model.variables))
    print(model.variables)

Model evaluation (a fragment: `data_loader`, `batch_size`, and a classification `model` are not defined in this section, so it shows only the metric-update pattern):

    sparse_categorical_accuracy = tf.keras.metrics.SparseCategoricalAccuracy()
    num_batches = int(data_loader.num_test_data // batch_size)
    for batch_index in range(num_batches):
        start_index, end_index = batch_index * batch_size, (batch_index + 1) * batch_size
        y_pred = model.predict(data_loader.test_data[start_index: end_index])
        sparse_categorical_accuracy.update_state(
            y_true=data_loader.test_label[start_index: end_index], y_pred=y_pred)
    print("test accuracy: %f" % sparse_categorical_accuracy.result())
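
As a sanity check on the gradient-descent fit of `a` and `b` in the first part of the source code, the same normalized data can also be fit in closed form with ordinary least squares. The sketch below is not part of the original tutorial. It uses only NumPy's `np.linalg.lstsq`, and the variable name `design` is an assumption introduced for illustration.

```python
import numpy as np

# Same raw data as the gradient-descent example.
X_raw = np.array([2013, 2014, 2015, 2016, 2017], dtype=np.float64)
y_raw = np.array([12000, 14000, 15000, 16500, 17500], dtype=np.float64)

# Same min-max normalization to [0, 1].
X = (X_raw - X_raw.min()) / (X_raw.max() - X_raw.min())
y = (y_raw - y_raw.min()) / (y_raw.max() - y_raw.min())

# Design matrix [X, 1]: the column of ones lets the intercept b be fitted too.
design = np.stack([X, np.ones_like(X)], axis=1)

# Least-squares solution of design @ [a, b] ~= y.
(a, b), *_ = np.linalg.lstsq(design, y, rcond=None)
print(a, b)  # approximately 0.9818 and 0.0545
```

The SGD loop minimizes the same sum-of-squares loss, so its printed `a` and `b` should approach these values. With a small learning rate and a fixed number of epochs it may stop slightly short of them.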

推荐阅读

-

央视新闻|49岁眼科女医生去世,将眼角膜和遗体分别捐献给医院和大学

-

-

安东尼|美国女子陈尸家中两年死因成谜,四子女同屋照常生活

-

猛龙|30+11+8三分!范弗里特打疯了,真对不起,小卡真不是单核夺冠

-

-

央视新闻客户端|服务贸易到底是啥?它和日常生活有啥联系?一文看懂:服贸就在我们身边

-

周迅|48岁周迅怎么还那么嫩!庆生照穿衬衫古灵精怪美成少女,状态超好

-

-

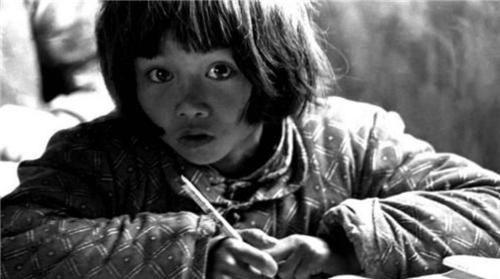

29年前,那位大喊“我要读书”的大眼睛女孩苏明娟,如今成了这样

-

-

德国党卫军跟国防军区别,二战时期德国国防军跟党卫军有什么区别-

-

阿里巴巴从蒋凡事件谈阿里巴巴合伙人制度设计:股权激励费用数目惊人

-

小丑的娱乐|萧亚轩男友累到吐血就医:天后表示非常的自责,富婆真是不好伺候

-

经济|新闻观察 | 各地项目集中开工支撑基建投资保持高位 投资需求加速释放

-

zol中关村在线首款支持5G+Wi-Fi 6平板官宣 四等边框设计爱了

-

美国今年近2.7万人因枪死亡|美国今年近2.7万人因枪死亡 具体是什么情况?

-

[生肖]家有此3大生肖,贵人助力,富足旺财,6月下旬,职场风生水起

-

南充新闻网|白俄罗斯代表46国在联合国人权理事会作共同发言支持中国在涉疆问题上的立场

-

鼎盛军事|配备中国造14.5重机枪,威力十分强悍,伊朗自产防雷车已大量服役

-

![[生肖]家有此3大生肖,贵人助力,富足旺财,6月下旬,职场风生水起](http://img88.010lm.com/img.php?https://image.uc.cn/s/wemedia/s/2020/6713007d388380cdc720a75fc79f7b37.jpg)